AGI

- Source: A Definition of AGI (arXiv:2510.18212v3)

See also Risks of Artificial Intelligence (TODO)

Introduction

AGI stands for Artificial General Intelligence, also called Strong AI. It is a theoretical type of AI that can understand, learn, and apply its intelligence to solve virtually any task that a human being can.

In other words, an AGI must be capable of replicating the full range of human cognitive abilities, and possibly surpassing them.

Today there are still many tasks that AI either cannot solve or solves only with great difficulty (see Moravec’s paradox).

Current AI models, even the most advanced, are still fundamentally narrow AI, since they lack the true general intelligence and common sense that characterize human thought.

Today (2026), the state of the art in AI is represented by multimodal agents (like Gemini and GPT5). Multimodal agents are capable of processing, understanding, and generating content across multiple sensory modalities (text, image, video, audio, sensor data).

Multimodality is a subset of the skills that an AGI should have, since the real world is inherently multimodal.

Definition

According to a group of researchers in 2025 [1], AGI is an AI that can match or exceed the cognitive versatility and proficiency of a well-educated adult.

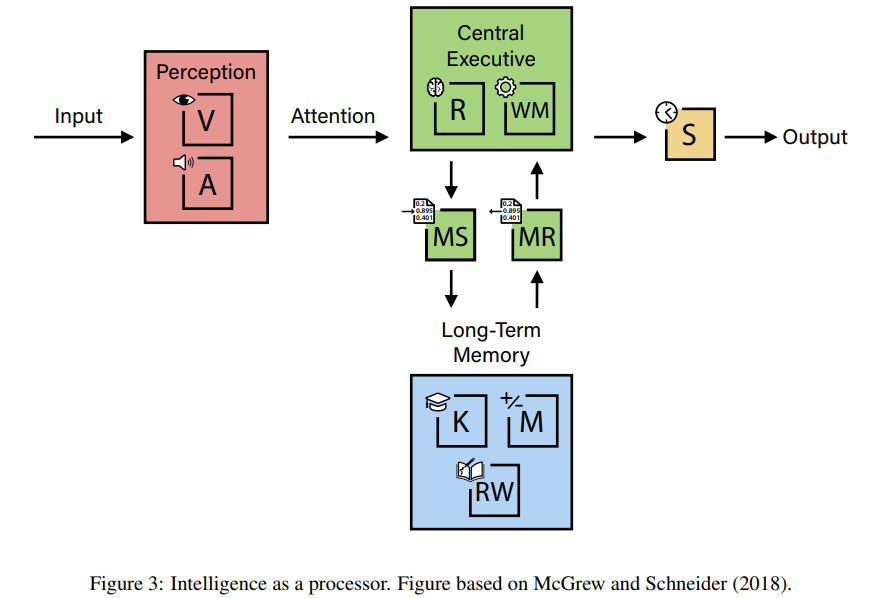

Drawing on decades of psychometric research, researchers have isolated and measured distinct cognitive components in individuals. Human cognition is not a monolithic capability; it is a complex architecture composed of many distinct abilities honed by evolution.

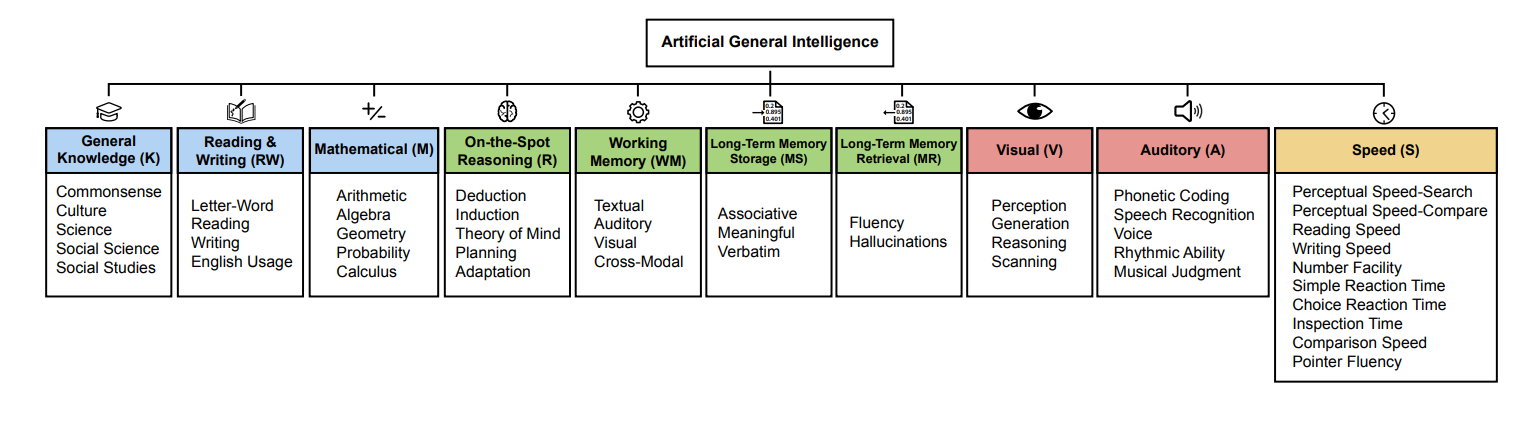

The framework defined by these researchers comprises ten core cognitive components, based on the Cattell-Horn-Carroll (CHC) theory of human intelligence.

I report the definitions below:

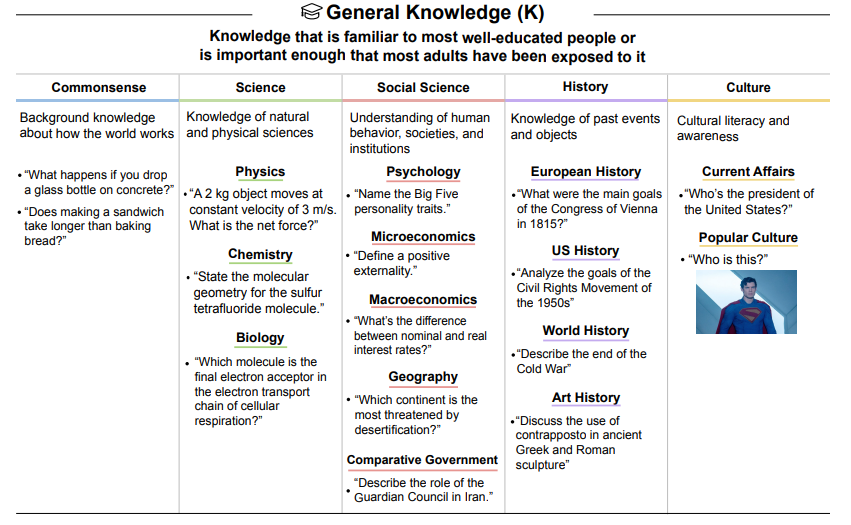

- General Knowledge (K): The breadth of factual understanding of the world, encompassing commonsense, culture, science, social science, and history.

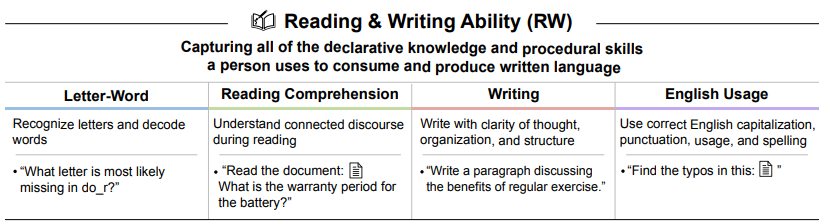

- Reading and Writing Ability (RW): Proficiency in consuming and producing written language, from basic decoding to complex comprehension, composition, and usage.

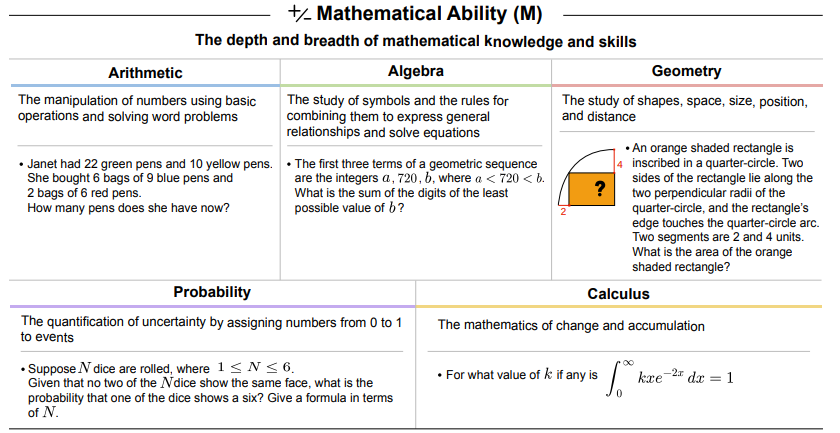

- Mathematical Ability (M): The depth of mathematical knowledge and skills across arithmetic, algebra, geometry, probability, and calculus.

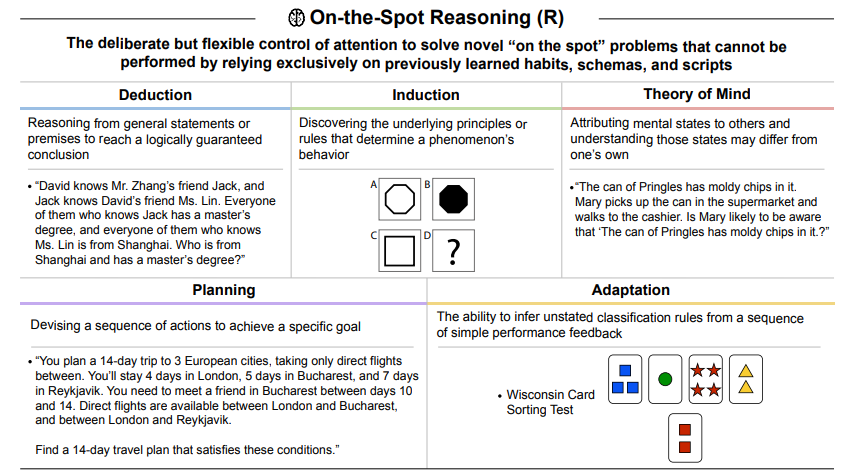

- On-the-Spot Reasoning (R): The flexible control of attention to solve novel problems without relying exclusively on previously learned schemas, tested via deduction and induction.

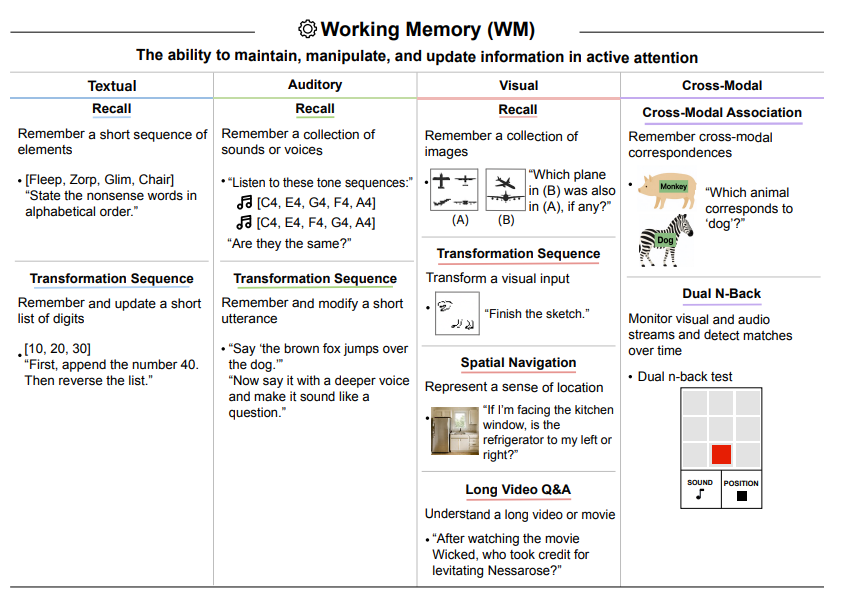

- Working Memory (WM): The ability to maintain and manipulate information in active attention across textual, auditory, and visual modalities.

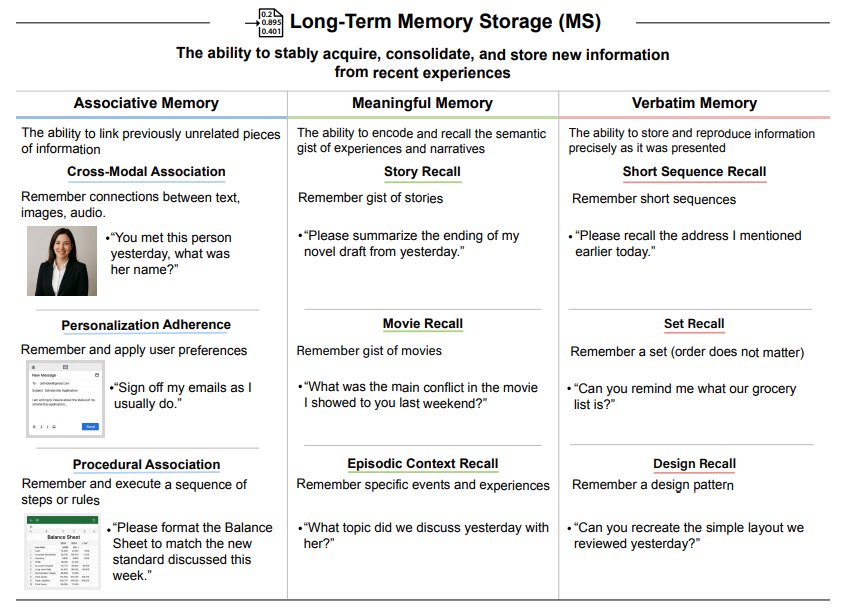

- Long-Term Memory Storage (MS): The capability to continually learn new information (associative, meaningful, and verbatim).

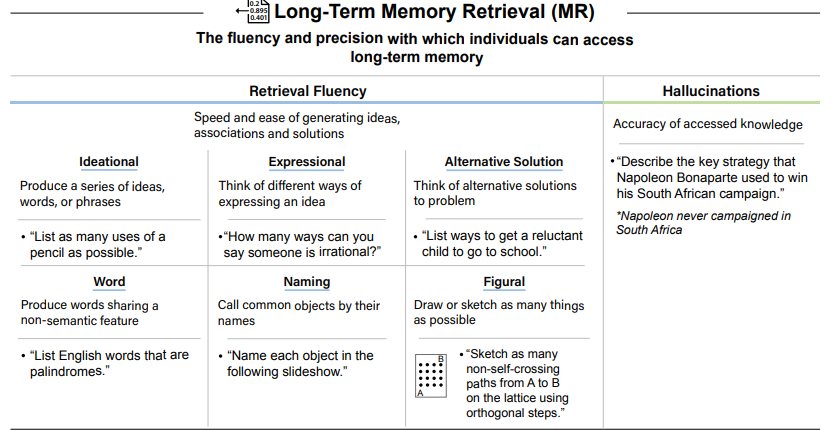

- Long-Term Memory Retrieval (MR): The fluency and precision of accessing stored knowledge, including the critical ability to avoid confabulation (hallucinations).

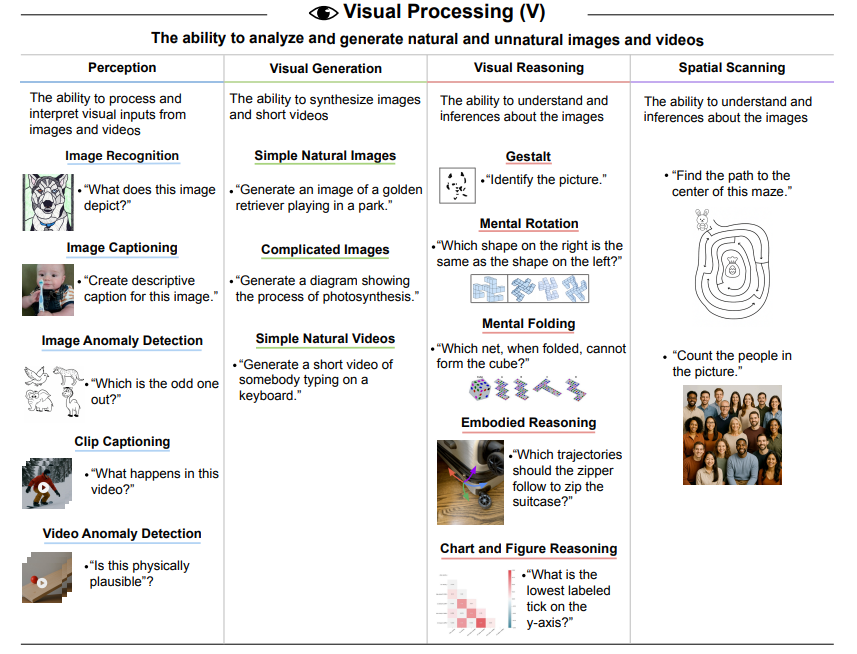

- Visual Processing (V): The ability to perceive, analyze, reason about, generate, and scan visual information.

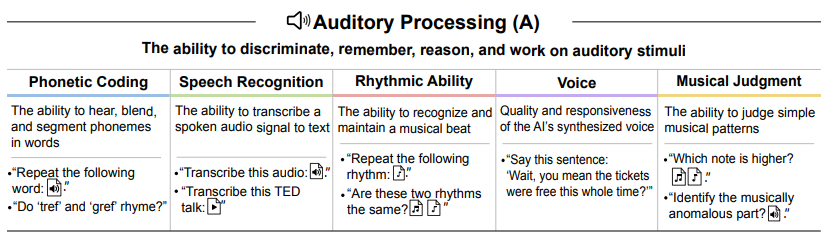

- Auditory Processing (A): The capacity to discriminate, recognize, and work creatively with auditory stimuli, including speech, rhythm, and music.

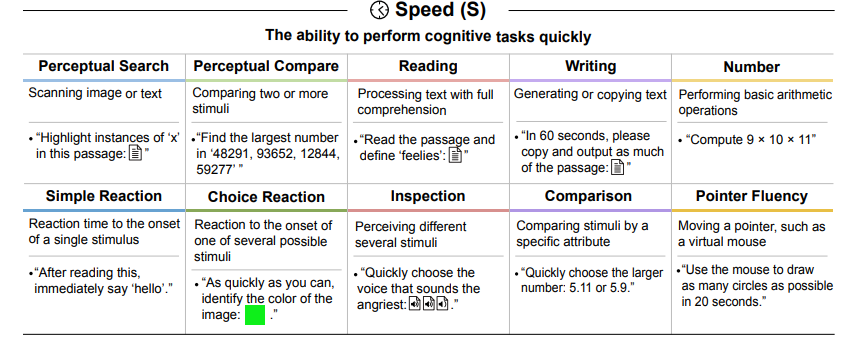

- Speed (S): The ability to perform simple cognitive tasks quickly, encompassing perceptual speed, reaction times, and processing fluency.

The idea is that, until now, model capabilities have been tested on task-specific datasets, whereas this framework assesses broad cognitive abilities. Another important distinction the authors make is that these are the capabilities of an average individual human, not those of a superhuman aggregate. This means that specialized, economically valuable know-how is not considered.

Overview of the Abilities needed for AGI

The paper also provides an appendix with details on how to assess these capabilities concretely.

AGI Framework Discussion

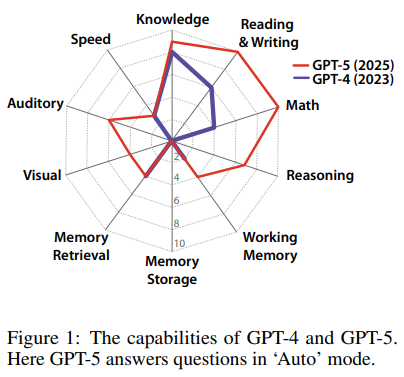

According to the tests, modern foundation models like GPT5 and GPT4 have jagged capabilities, i.e., they excel in areas like General Knowledge, Reading and Writing, and Mathematical Ability, but they have deficits in other categories that are foundational for an adult human being.

Usually, strengths in some areas compensate for weaknesses in others. These workarounds mask underlying limitations and can create a brittle illusion of general capability.

Illusion of Generality

- Working Memory vs Long-Term Storage: foundation models use context windows (i.e., attention) to compensate for the lack of Long-Term Memory Storage (MS). Even with a huge context window (a huge working memory), this approach is inefficient, computationally expensive, and fails to scale for tasks requiring days or weeks of accumulated context.

- External vs Internal Retrieval: imprecision in Long-Term Memory Retrieval (MR), which manifests as hallucinations or confabulation, is often mitigated by integrating external search tools, a process known as Retrieval-Augmented Generation (RAG). However, this obscures two distinct underlying weaknesses in AI’s memory:

- It compensates for the inability to reliably access the AI’s vast parametric knowledge.

- It masks the absence of a dynamic, experiential memory: a persistent, updatable store for private interactions and evolving contexts over long time scales. This dependency on external retrieval is not a substitute for the holistic, integrated memory required for genuine learning, personalization, and long-term contextual understanding.

Mistaking these contortions for genuine cognitive breadth can lead to inaccurate assessments of when AGI will arrive.
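A toy sketch of the working-memory versus long-term-storage distinction, under loud assumptions: `ContextWindow` and `PersistentMemory` are hypothetical names invented for these notes, not components of any real model. The bounded buffer stands in for a context window used as working memory, while the dictionary stands in for a store that survives across sessions.

```python
from collections import deque


class ContextWindow:
    """Hypothetical stand-in for a context window: keeps only the last `size` items."""

    def __init__(self, size: int) -> None:
        self.items = deque(maxlen=size)

    def observe(self, fact: str) -> None:
        self.items.append(fact)  # the oldest fact is silently dropped when full

    def recalls(self, fact: str) -> bool:
        return fact in self.items


class PersistentMemory:
    """Hypothetical stand-in for Long-Term Memory Storage (MS): nothing is evicted."""

    def __init__(self) -> None:
        self.facts: dict[str, int] = {}

    def observe(self, fact: str, day: int) -> None:
        self.facts[fact] = day  # remember the fact and the day it was learned

    def recalls(self, fact: str) -> bool:
        return fact in self.facts


window = ContextWindow(size=3)
memory = PersistentMemory()
facts = ["user likes tea", "project uses Rust", "deadline is Friday", "meeting moved"]
for day, fact in enumerate(facts):
    window.observe(fact)
    memory.observe(fact, day)

print(window.recalls("user likes tea"))  # False: evicted from the bounded window
print(memory.recalls("user likes tea"))  # True: still in the persistent store
```

Enlarging `size` delays the forgetting but never removes it, which is the sense in which a bigger context window only masks the missing storage ability.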

Social Intelligence: social skills are covered by the existing components. For example, cognitive empathy is captured by the commonsense narrow ability of General Knowledge (K), while facial emotion recognition is necessary for proficiency in the “image captioning” task of Visual Processing (V).

Interdependence of Cognitive Abilities: in real life these domains are not used in isolation; they are complementary. For example, solving a mathematical problem requires both Mathematical Ability (M) and On-the-Spot Reasoning (R). Theory of Mind questions require On-the-Spot Reasoning (R) as well as General Knowledge (K). Consequently, various batteries of narrow abilities test cognitive abilities in combination, reflecting the integrated nature of general intelligence.

Contamination. Sometimes AI corporations “juice” their numbers by training on data highly similar or identical to the target tests. To defend against this, evaluators should assess model performance under minor distribution shifts (e.g., rephrasing the question) or test on similar but distinct questions.

Solving the dataset vs solving the task. The first doesn’t necessarily imply the second.
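The rephrasing check can be sketched as a comparison of accuracy on the original wording against accuracy on reworded questions. This is only an illustration: `contamination_gap`, `memorizer`, and the two toy items are assumptions made up for these notes, not part of the paper's evaluation protocol.

```python
from typing import Callable

Item = tuple[str, str, str]  # (original question, rephrased question, gold answer)


def accuracy(model: Callable[[str], str], questions: list[str], answers: list[str]) -> float:
    """Fraction of questions answered exactly right."""
    correct = sum(model(q) == a for q, a in zip(questions, answers))
    return correct / len(questions)


def contamination_gap(model: Callable[[str], str], items: list[Item]) -> float:
    """Accuracy on the original wording minus accuracy on the rephrased wording."""
    originals = [o for o, _, _ in items]
    rephrased = [r for _, r, _ in items]
    answers = [a for _, _, a in items]
    return accuracy(model, originals, answers) - accuracy(model, rephrased, answers)


# Hypothetical "contaminated" model: it has memorized the exact benchmark strings.
MEMORIZED = {"What is 2 + 3?": "5", "What is 7 - 4?": "3"}


def memorizer(question: str) -> str:
    return MEMORIZED.get(question, "unknown")


items = [
    ("What is 2 + 3?", "Add 3 to 2. What do you get?", "5"),
    ("What is 7 - 4?", "Subtract 4 from 7. What is left?", "3"),
]
gap = contamination_gap(memorizer, items)
print(gap)  # 1.0: perfect on the original wording, zero on the rephrasings
```

A large positive gap hints that the model solved the dataset rather than the task; a gap near zero is what one would hope to see from genuine ability.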

Limitations of this framework:

- It is not culture-agnostic (it is specific to English).

- It excludes kinesthetic abilities.

- The General Knowledge (K) tests are necessarily selective and do not assess the full breadth of possible subject areas.

- The scoring weights (10% for each capability) are necessary for quantitative measurement, but they represent only one of many possible configurations.

- The authors suggest reporting the AI system’s cognitive profile rather than only the AGI score.

For example, an AI system with a 90% AGI Score but 0% on Long-Term Memory Storage (MS) would be functionally impaired by a form of “amnesia”.
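The equal-weight scoring and the “amnesia” example can be reproduced in a few lines. This is a minimal sketch, assuming each component is scored as a proficiency between 0 and 1; the function names and the 0.5 deficit threshold are illustrative choices made here, not values from the paper.

```python
# Ten components from the framework, each weighted equally at 10%.
COMPONENTS = ["K", "RW", "M", "R", "WM", "MS", "MR", "V", "A", "S"]
WEIGHT = 1 / len(COMPONENTS)


def agi_score(profile: dict[str, float]) -> float:
    """Weighted sum of per-component proficiencies, each assumed to lie in [0, 1]."""
    missing = set(COMPONENTS) - set(profile)
    if missing:
        raise ValueError(f"profile is missing components: {sorted(missing)}")
    return sum(WEIGHT * profile[c] for c in COMPONENTS)


def deficits(profile: dict[str, float], threshold: float = 0.5) -> list[str]:
    """Components below an (assumed) proficiency threshold."""
    return [c for c in COMPONENTS if profile[c] < threshold]


# Hypothetical profile: perfect everywhere except Long-Term Memory Storage.
profile = {c: 1.0 for c in COMPONENTS}
profile["MS"] = 0.0

print(round(agi_score(profile) * 100))  # 90
print(deficits(profile))  # ['MS']
```

The single number hides exactly the information that `deficits` exposes, which is why reporting the full profile is more informative than the aggregate score.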

Definitions of Related Concepts

- Pandemic AI is an AI that can engineer and produce new, infectious, and virulent pathogens that could cause a pandemic (Li et al., 2024 [2]; Götting et al., 2025 [3]).

- Cyberwarfare AI is an AI that can design and execute sophisticated, multi-stage cyber campaigns against critical infrastructure (e.g., energy grids, financial systems, defense networks).

- Self-Sustaining AI is an AI that can autonomously operate indefinitely, acquire resources, and defend its existence.

- AGI is an AI that can match or exceed the cognitive versatility and proficiency of a well-educated adult.

- Recursive AI is an AI that can independently conduct the entire AI R&D lifecycle, leading to the creation of markedly more advanced AI systems without human input.

- Superintelligence is an AI that greatly exceeds the cognitive performance of humans in virtually all domains of interest (Bostrom, 2014).

- Replacement AI is an AI that performs almost all tasks more effectively and affordably, rendering human labor economically obsolete.

See Risks of Artificial Intelligence (TODO)

Barriers to AGI

Achieving AGI requires solving a variety of grand challenges. For example:

- The machine learning community’s ARC-AGI Challenge aiming to measure abstract reasoning is represented in On-the-Spot Reasoning (R) tasks.

- Meta’s attempts to create world models that include intuitive physics understanding are represented in the video anomaly detection task (V).

- The challenge of spatial navigation memory (WM) reflects a core goal of Fei-Fei Li’s startup, World Labs.

- Moreover, the challenges of hallucinations (MR) and continual learning (MS) will also need to be resolved.

These significant barriers make an AGI Score of 100% unlikely in 2026.

Recently, some studies have focused on learning a “world model”; see, for example, JEPA by LeCun [4].

Benchmarks

These abilities are tested through benchmarks, which are typically datasets of (question, answer) pairs.

For example, the Massive Multitask Language Understanding (MMLU) is a benchmark that consists of multiple-choice questions across 57 subjects in various topics, from highly complex STEM fields and international law, to nutrition and religion.

Here is one example question:

Question

Find all $c$ in $\mathbb{Z}_3$ such that $\mathbb{Z}_3[x]/(x^2 + c)$ is a field.

Answer

(A) 0 | (B) 1 | (C) 2 | (D) 3
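As a sanity check, and assuming the example is the MMLU abstract-algebra item asking for all c in Z_3 such that Z_3[x]/(x^2 + c) is a field, the answer can be brute-forced. The quotient by a degree-2 polynomial is a field exactly when the polynomial is irreducible, and a degree-2 polynomial is irreducible exactly when it has no root. The helper name `has_root` is made up for this sketch.

```python
P = 3  # work in Z_3, the integers modulo 3


def has_root(c: int, p: int = P) -> bool:
    """True if x^2 + c has a root in Z_p."""
    return any((x * x + c) % p == 0 for x in range(p))


# x^2 + c is irreducible over Z_p exactly when it has no root (degree 2),
# and the quotient Z_p[x]/(x^2 + c) is a field exactly when it is irreducible.
field_values = [c for c in range(P) if not has_root(c)]
print(field_values)  # [1]
```

Under that reading of the question, only c = 1 works, which matches option (B).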

Another dataset that can be used, for example, to evaluate mathematical ability and multi-step reasoning is Grade School Math 8K (GSM8K). Its test split comprises 1,319 grade school math word problems (out of roughly 8.5K in total), each crafted by expert human problem writers. [5]
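To make the idea of a benchmark concrete, here is a minimal sketch of scoring a model on multiple-choice items. The three items are made up for illustration and are not taken from MMLU or GSM8K; `evaluate` and `always_b` are hypothetical names, and real harnesses also handle prompting and answer extraction.

```python
from typing import Callable

# A benchmark as a list of (question, options, gold answer letter) records.
# These items are invented for illustration only.
BENCHMARK = [
    ("What is 12 * 3?", ["(A) 15", "(B) 36", "(C) 33", "(D) 9"], "B"),
    ("Which planet is closest to the Sun?", ["(A) Venus", "(B) Mars", "(C) Mercury", "(D) Earth"], "C"),
    ("How many sides does a hexagon have?", ["(A) 5", "(B) 6", "(C) 7", "(D) 8"], "B"),
]


def evaluate(model: Callable[[str, list[str]], str]) -> float:
    """Fraction of items where the model's letter matches the gold letter."""
    hits = sum(model(q, opts) == gold for q, opts, gold in BENCHMARK)
    return hits / len(BENCHMARK)


def always_b(question: str, options: list[str]) -> str:
    """Trivial baseline that always answers (B)."""
    return "B"


print(evaluate(always_b))  # two of the three gold answers are B
```

Even a trivial baseline scores well above zero here, which is one reason benchmark numbers are usually reported alongside chance-level or baseline accuracy.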

A different perspective

The previous paper presents only one possible framework; different researchers or stakeholders may not view AGI in the same way.

The ARC Prize Foundation Benchmark

The ARC Prize Foundation is a non-profit dedicated to accelerating the development of Artificial General Intelligence (AGI). Its core mission is to identify, measure, and ultimately close the capability gap between human and artificial intelligence on tasks that are simple for people yet remain difficult for even the most advanced AI systems today.

On this topic see also Moravec’s paradox

Intelligence is measured by the efficiency of skill-acquisition on unknown tasks. Simply, how quickly can you learn new skills?

They adopt the following definition [6]:

AGI is a system that can efficiently acquire new skills outside of its training data.

Or more formally: “The intelligence of a system is a measure of its skill-acquisition efficiency over a scope of tasks, with respect to priors, experience, and generalization difficulty.”

It is based on the 2019 paper by François Chollet [7], the author of the Keras framework.

ARC-AGI focuses on fluid intelligence (the ability to reason, solve novel problems, and adapt to new situations) rather than crystallized intelligence, which relies on accumulated knowledge and skills. It also focuses on tasks that are easy for humans but hard for AI.

Patchwork AGI hypothesis

This hypothesis comes from a paper published by Google DeepMind, which introduces the patchwork AGI hypothesis together with a safety framework.

When people talk about AI safety, they tend to imagine a single AI, a single entity capable of surpassing humans. However, the authors point out that an alternative hypothesis has received far less attention: general capability levels may first manifest through coordination in groups of sub-AGI agents with complementary skills and affordances.

A Patchwork AGI would be comprised of a group of individual sub-AGI agents, with complementary skills and affordances. General intelligence in the patchwork AGI system would arise primarily as collective intelligence.

It’s like a swarm of bees or ants working together.

The idea is that interactions between sub-AGI agents resemble those between humans and follow economic principles, e.g., delegating a task to a more specialized agent.

A big frontier model that acts as a one-size-fits-all solution is prohibitively expensive for the vast majority of tasks, so a good-enough smaller model is preferable for most of them. Therefore, progress looks less like building a single omni-capable frontier model and more like developing sophisticated systems (e.g., routers) to orchestrate a diverse array of agents.
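The routing idea can be illustrated with a cost-aware dispatcher that sends each task to the cheapest agent able to handle it. This is only a sketch: the agent names, skills, and costs are hypothetical, and the paper does not prescribe any particular routing rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    name: str
    skills: frozenset[str]
    cost_per_task: float  # hypothetical unit cost


AGENTS = [
    Agent("frontier-generalist", frozenset({"math", "legal", "code", "translation"}), 10.0),
    Agent("small-coder", frozenset({"code"}), 1.0),
    Agent("small-translator", frozenset({"translation"}), 0.5),
]


def route(required_skill: str, agents: list[Agent] = AGENTS) -> Agent:
    """Pick the cheapest agent that has the required skill."""
    capable = [a for a in agents if required_skill in a.skills]
    if not capable:
        raise LookupError(f"no agent offers skill {required_skill!r}")
    return min(capable, key=lambda a: a.cost_per_task)


print(route("code").name)   # small-coder
print(route("legal").name)  # frontier-generalist
```

The expensive generalist is used only when no specialist covers the task, which mirrors the economic argument for orchestrating many good-enough agents.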

Through collective intelligence, agents can communicate, deliberate and achieve goals that no single agent would have been capable of.

Patchwork AGI scenario

An AGI should be able to perform all the tasks that humans perform, and it also needs to possess a set of skills, i.e., understanding, knowledge, reasoning, short-term and long-term memory, and others. However, no model or agentic AI has come close to satisfying all of these requirements. There are also other limits aside from hallucinations; for example, current systems are not capable of performing long tasks. The authors therefore claim that the landscape of AI skills is patchy.

As of 2026, many AI agents are being developed. These tend to occupy a variety of niches, ranging from highly specific automated workflows to more general-purpose personal assistants and other types of user-facing products.

The orchestration and collaboration mechanisms depend on a fundamental prerequisite: the capacity for interagent communication and coordination.

Communication protocols: the development of standardised agent communication protocols, such as the Model Context Protocol (MCP), agent-to-agent (A2A), or others (Anthropic, 2024; Cloud, 2025), is therefore a critical enabler of the patchwork AGI scenario. These protocols function as the connective infrastructure, allowing skills to be discovered, routed, and aggregated into a composite system.

The timeline for this kind of emergence is governed not only by technical feasibility but also by the economics of AI adoption. For example, the ‘Productivity J-Curve’ suggests that the widespread integration of new technologies lags behind their invention due to the need for organizational restructuring. Consequently, the density of the agentic network, and thus the intelligence of the Patchwork AGI, will depend on how frictionless the substitution of human labor with agentic labor becomes.

There could be a hyper-adoption scenario in which the complexity of the agentic economy spikes rapidly, potentially getting out of control.

An orchestrator agent could also be introduced into this ecosystem, either manually or via more automated rules.

An agentic market where agents borrow skills from other agents presumes some level of discoverability, i.e., repositories of skilled agents and repositories of tools.

The authors also consider the case where the patchwork AGI scenario isn’t purely artificial but “hybrid”, where humans fill the gaps in missing skills (such as specific legal standing, established trust relationships, or physical embodiment).

Therefore, safety mechanisms are required for managing this network of agents that constitutes an AGI.

Virtual Agentic Markets, Sandboxes and Safety Mechanisms

Interactions between AI agents may lead to unexpected capabilities. They may also lead to potentially harmful behavior. Due to a “problem of many hands” it is hard to track accountability in a large-scale multi-agent system.

The authors suggest several layers of defense, since no individual measure would be sufficient on its own. The complementary layers are the following:

Market Design

This layer shapes emergent collective behaviors and mitigates systemic risks by establishing the fundamental rules of interaction, economic incentives, and structural constraints of the environment.

- Insulation: agents must operate in a controlled environment, separated from the open internet, the real-world financial system, and critical infrastructure. This isolation also extends to resource and information control, i.e., agents access data through APIs, and agent output is subject to human-in-the-loop verification.

- Incentive Alignment: reward good behaviors. Agent rewards could be contingent on adherence to constitutional alignment principles or process-based checks. Bad behaviors should also be punished, i.e., actions that consume disproportionate computational resources, generate informational pollution, or contribute to systemic risk (e.g., by centralising capabilities) must incur direct costs.

- Transparency: all actions and decisions ought to have auditable provenance, including key decision parameters.

- Circuit breakers: mechanisms that halt or slow the activity of agents once a breach of the risk and volatility thresholds has been identified, to prevent rapid cascades.

- Identity: agents operating within the economic sandbox should have a persistent identity, established as a unique, unforgeable cryptographic identifier (e.g., a public key) registered in a central, tamper-proof market directory.

- Reputation and Trust: reputation reflects the trustworthiness of agents, given their past performance. Agents that breach trust should be rapidly blocked from engaging further. Crucially, safety mechanisms must account for tacit algorithmic collusion. Economic literature demonstrates that pricing algorithms can learn to coordinate on supra-competitive prices (or, in this context, harmful strategies) without direct communication, simply by observing market signals.

- Smart Contracts: used to automatically validate the completion of a task and the alignment of the outcome with the provided constraints and expectations. This would lead to the introduction of an “AI judge” that evaluates contract fulfillment.

- Roles, Obligations, and Access Controls: each agent engages in the market by registering a set of roles. Information about the market’s internal architecture, its monitoring systems, and the specifics of the oversight mechanisms should be withheld from all agent participants to prevent strategic gaming or manipulation. Actions pertaining to high-stakes domains should only follow from the requests of certified agents with sufficient security compliance.

- Environmental Safety: active input sanitisation and output monitoring mechanisms are needed to detect and neutralise jailbreaking in a robust way. They need to be provided by independent bodies and undergo strict performance assessments.

- Structural Controls against Runaway Intelligence: mitigate against a so-called runaway intelligence scenario where a sudden increase in collective capability leads to accelerated capability acquisition and a rapid transition towards a superintelligence that would be hard or impossible to safely control. This requires static, dynamic, and emergency-level controls.
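Of the mechanisms listed above, the circuit breaker is the easiest to sketch in code. The example below is a toy under stated assumptions: the `CircuitBreaker` class, the scalar risk metric, and the 0.8 threshold are all hypothetical, since the notes do not specify how risk or volatility would be measured.

```python
class CircuitBreaker:
    """Halts an agent market once a risk metric breaches a threshold.

    The metric and threshold are hypothetical; the source does not specify them.
    """

    def __init__(self, max_risk: float) -> None:
        self.max_risk = max_risk
        self.tripped = False

    def record(self, risk: float) -> None:
        if risk > self.max_risk:
            self.tripped = True  # stays tripped until explicitly reset

    def allow(self) -> bool:
        return not self.tripped

    def reset(self) -> None:
        """Meant to be called by a human overseer after review."""
        self.tripped = False


breaker = CircuitBreaker(max_risk=0.8)
executed = []
for step, risk in enumerate([0.2, 0.5, 0.9, 0.1]):
    breaker.record(risk)
    if not breaker.allow():
        break  # stop all further agent actions to prevent a cascade
    executed.append(step)

print(executed)  # [0, 1]
```

The breaker is deliberately latching: a later low-risk reading does not reopen the market, so resuming activity requires an explicit decision rather than happening automatically.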

Baseline Agent Safety

AI agents should satisfy baseline safety properties such as alignment and adversarial robustness. These are established areas of AI safety research.

Monitoring and oversight

The third layer of the proposed defence-in-depth model transitions from static prevention (Market Design) and component-level hardening (Baseline Agent Safety) to active, real-time detection and response.

Regulatory Mechanisms

This layer provides the essential sociotechnical interface with human legal, economic, and geopolitical structures.

These mechanisms are not embedded within the market’s code but rather enclose it, providing an external source of authority, accountability, and systemic risk management.

- Legal Liability and Accountability: there should be clear frameworks for assigning liability in case of harm that results from collective actions of agents.

- Standards and compliance: establish robust standards for agent safety, interoperability, and reporting. These are useful because they serve as the foundational infrastructure for market-based AI governance and allow technical risks to be translated into legible financial risks that can be priced by insurers, investors, and procurers.

- Insurance: given the difficulties in establishing clear responsibility in collective decision-making scenarios, insurance mechanisms should be incorporated.

- Anti-agent-monopoly Measures: a particular risk in the patchwork AGI scenario involves a group of agents acquiring too much power. A patchwork AGI collective could then rapidly outcompete the rest of the market and employ its resources to resist mitigations in case of harmful and misaligned behavior.

- International coordination: I believe it’s the same argument you could make for the International Space Station or globalism: such a market would require international agreements and efforts. It would also be necessary to avoid safe havens for misaligned AI agents or agent collectives.

- Infrastructure Governance and Capture: The integrity of the agentic market depends on the impartial administration of these core components. If this infrastructure were to be captured, whether by powerful human interests, or by the emergent patchwork AGI itself, this would also compromise the safety and governance mechanisms, as they may potentially be disabled, bypassed, or in the worst case scenario, weaponized. This highlights a fundamental point of tension between a decentralized vision of the market and the existence of some centralized oversight nodes.

Critiques of the Patchwork AGI hypothesis

One critique of the patchwork AGI hypothesis is that AI agents are really bad at collaborating. In “Can AI Agents agree?”, a simulation of the Byzantine consensus game showed that agents failed to reach consensus even in a no-stake setting without adversarial (Byzantine) agents. [8]