AI - Lecture - Introduction and Agents

- Source: Book Artificial Intelligence: A Modern Approach, 4th edition (Global Edition), Russell and Norvig, Chapter 3

Artificial Intelligence Overview

Artificial Intelligence (AI) is the study of ideas, techniques, and algorithms that form the foundation for building autonomous systems capable of performing tasks that typically require intelligence.

Core Pillars of AI

- Search: Finding solutions to problems (e.g., pathfinding, game strategies like tic-tac-toe).

- Knowledge: Representing information and drawing inferences.

- Uncertainty: Dealing with events that occur with a given probability.

- Optimization: Selecting the best path or solution among multiple alternatives to maximize a goal.

Defining Intelligence

Intelligence is difficult to define uniquely but generally involves a set of capabilities:

- Learning and thinking.

- Understanding and communicating.

- Self-consciousness and building abstract models of the world.

- Planning and adapting to novel conditions.

Four Approaches to AI

AI research is categorized by whether the goal is to mimic human processes or achieve ideal rationality:

- Thinking Humanly: Cognitive Science approach. Focuses on internal activities of the brain and information-processing psychology.

- Acting Humanly: The Turing Test (1950) approach. A machine passes if a human interrogator cannot distinguish it from a human. Requires knowledge representation, reasoning, language understanding, and learning.

- Thinking Rationally: “Laws of Thought” approach. Uses logic and formal syllogisms (Aristotle) to codify “right thinking” via irrefutable reasoning processes.

- Acting Rationally: The Rational Agent approach. Focuses on designing systems that “do the right thing” to maximize goal achievement given available information.

A Brief History of AI

- 1940-1950: Early days. McCulloch & Pitts (1943) Boolean neuron model; Hebbian learning (1949); Turing’s “Computing Machinery and Intelligence” (1950).

- 1956: Dartmouth Workshop. AI officially born as a discipline (McCarthy, Minsky, Newell, Simon).

- 1952-1969: Early enthusiasm. Logic Theorist, General Problem Solver, Checkers program (Samuel), Lisp.

- 1966-1973: First AI Winter. Collapse due to slow advancements, non-scalable systems (combinatorial explosion), and fundamental limits of representations (Perceptrons by Minsky & Papert).

- 1969-1970s: AI Revival. Knowledge-based approaches (DENDRAL, MYCIN) and Expert Systems.

- 1980s: AI Industry boom (Expert systems, Japan’s Fifth Generation Project) followed by the second “AI Winter” (1988-1993) as systems failed to meet high expectations.

- 1990-Present: The AI Spring.

  - 1986: Connectionist revival (Backpropagation).

  - 1990s: Statistical approaches and probability.

  - 2000s: Big Data, Deep Learning, and massive computing power.

  - Key Milestones: Deep Blue (1997), Watson (2011), AlphaGo (2016), ChatGPT (2022).

Ethical and Philosophical Issues

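- Weak AI: Machines can act as if they were intelligent, i.e., emulate intelligence (Searle's Chinese Room argument holds that this is all a program can do).

- Strong AI: Machines that act intelligently are actually thinking, i.e., they can be intelligent (Turing’s view).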

- Gorilla Problem:

  - The risk of a biological species (here, humans) losing control to a superior artificial intelligence, just as gorillas have lost control of their fate to humans.

  - Developing an artificial superintelligence that surpasses human intelligence may pose a significant risk.

- Risks: Lethal autonomous weapons, biased decision-making, unemployment, and cybersecurity threats.

Intelligent Agents

AI focuses on building rational agents, i.e., agents that choose the best possible action based on what they know. An agent doesn’t need to be perfect, just optimal given its knowledge.

An agent is anything that perceives its environment through sensors and acts upon it through actuators. Agents include humans, robots, softbots, thermostats.

Key Definitions

- Percept: The agent’s perceptual inputs at any given instant.

- Percept Sequence: The complete history of everything the agent has perceived.

- Agent Function: An abstract mathematical description ($f: \mathcal{P}^* \rightarrow \mathcal{A}$) mapping percept sequences to actions.

- Agent Program: The concrete implementation of the agent function running on the physical architecture.

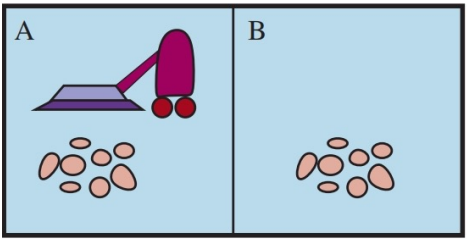

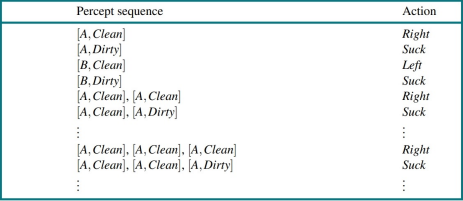
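One way to see the difference between the agent function and the agent program is a table-driven agent: the agent function is written out explicitly as a table from percept sequences to actions, and the program only records each new percept and looks up the action for the whole sequence so far. The Python sketch below assumes vacuum-world style `(location, status)` percepts, and the table is a small hypothetical fragment; a complete table would need an entry for every possible percept sequence, which is why this design is impractical beyond toy problems.

```python
from typing import Dict, List, Optional, Tuple

Percept = Tuple[str, str]  # (location, status), e.g. ("A", "Dirty")


def make_table_driven_agent(table: Dict[Tuple[Percept, ...], str]):
    """Return an agent program that implements the agent function given as `table`."""
    percepts: List[Percept] = []  # the percept sequence observed so far

    def program(percept: Percept) -> Optional[str]:
        percepts.append(percept)
        # Look up the action for the whole percept sequence (None if the table has no entry).
        return table.get(tuple(percepts))

    return program


# A tiny, hypothetical fragment of the agent function: percept sequence -> action.
table = {
    (("A", "Clean"),): "Right",
    (("A", "Dirty"),): "Suck",
    (("A", "Dirty"), ("A", "Clean")): "Right",
}

agent = make_table_driven_agent(table)
print(agent(("A", "Dirty")))
print(agent(("A", "Clean")))
```

The table is the (abstract) agent function; `program` is one concrete agent program that implements it.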

Example: Robot vacuum cleaner

- The sensors detect dirt.

- The actuators move the agent and clean.

- The agent function: if the current square is dirty, then clean it.

- The world consists of squares, each either dirty or clean. The agent usually starts in square A.

- It can perform the following actions: Left, Right, Suck, NoOp.

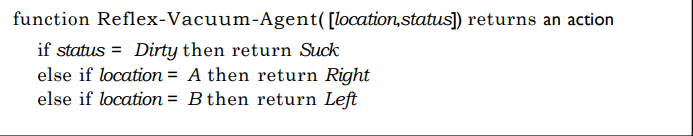

It can also be implemented as a small agent program.
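A minimal Python sketch of such a program, modelled on the textbook's reflex vacuum agent. It assumes the percept is a `(location, status)` pair for the two squares `"A"` and `"B"`; the function name and the `NoOp` fallback for an unknown location are illustrative choices.

```python
def reflex_vacuum_agent(percept):
    """Choose an action from the current percept only: (location, status)."""
    location, status = percept
    if status == "Dirty":
        return "Suck"
    if location == "A":
        return "Right"
    if location == "B":
        return "Left"
    return "NoOp"  # assumption: do nothing if the location is not recognised


for percept in [("A", "Dirty"), ("A", "Clean"), ("B", "Clean")]:
    print(percept, "->", reflex_vacuum_agent(percept))
```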

Defining a good behavior

An agent in an environment generates a sequence of actions according to the percepts it receives. This sequence of actions causes the environment to go through a sequence of states.

The desirability of this sequence is captured by a performance measure:

- It evaluates any given sequence of environment states.

- Possible performance measures for the vacuum-cleaner agent:

  - one point per square cleaned up within a given time

  - one point per clean square per time step, minus one per move (energy cost)

  - a penalty for dirty squares
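As an illustration, the second measure above (one point per clean square per time step, minus one per move) can be computed from a recorded history. The sketch assumes a hypothetical representation: each time step is recorded as a dict mapping square names to `"Clean"`/`"Dirty"` plus the action taken at that step, and only `"Left"` and `"Right"` count as moves.

```python
from typing import Dict, List, Tuple

State = Dict[str, str]  # square name -> "Clean" or "Dirty"


def performance(history: List[Tuple[State, str]]) -> int:
    """+1 for every clean square at every time step, -1 for every move (energy cost)."""
    score = 0
    for state, action in history:
        score += sum(1 for status in state.values() if status == "Clean")
        if action in ("Left", "Right"):
            score -= 1
    return score


history = [
    ({"A": "Dirty", "B": "Dirty"}, "Suck"),   # 0 clean squares
    ({"A": "Clean", "B": "Dirty"}, "Right"),  # 1 clean square, 1 move
    ({"A": "Clean", "B": "Dirty"}, "Suck"),   # 1 clean square
    ({"A": "Clean", "B": "Clean"}, "NoOp"),   # 2 clean squares
]
print(performance(history))
```

Swapping in a different measure (for example, dropping the move penalty) changes which behaviour scores best, so the measure should reflect what is actually wanted in the environment.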

Rationality

A Rational Agent chooses the action that maximizes the expected value of its performance measure, given its percept sequence and built-in knowledge.

Elements of Rationality:

- Performance Measure: The criterion for success.

- Prior Knowledge: What the agent knows about the environment.

- Actions: What the agent can perform.

- Percept Sequence: History to date.

Rationality requires exploration, learning, and autonomy:

- Exploration: the agent must gather information from an initially unknown environment.

- Learning: a rational agent should learn as much as possible from what it perceives.

- Autonomy: a rational agent should be autonomous, learning what it can to compensate for partial or incorrect prior knowledge.

Rationality is NOT omniscience (knowing the actual outcome) or clairvoyance, and it does not automatically translate into success. Suppose the forecast is for no rain, but someone brings an umbrella anyway and ends up using it to defend herself against a dog attack. Was bringing the umbrella rational? No: although it turned out to be successful, it was done for the wrong reason.

Agents don’t have to be successful, and they don’t have to know everything; they just have to do a good job with what they know and want.

Task Environments (PEAS)

The task environments are the problems for which the agents are the solutions. When designing an agent, the first step must always be to specify the task environment as fully as possible.

To design an agent, the task environment can be specified using the PEAS framework:

- Performance measure: e.g., safety, speed, profits for an automated taxi.

- Environment: e.g., city streets, weather, pedestrians.

- Actuators: e.g., steering, accelerator, brake, display.

- Sensors: e.g., cameras, LiDAR, GPS, keyboard.
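Writing the PEAS description down as a small record before writing any agent code is one way to make sure every part of the task environment is specified. A hypothetical Python representation of the automated-taxi example, using only the entries listed above:

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PEAS:
    """A task-environment specification: Performance, Environment, Actuators, Sensors."""
    agent: str
    performance: List[str] = field(default_factory=list)
    environment: List[str] = field(default_factory=list)
    actuators: List[str] = field(default_factory=list)
    sensors: List[str] = field(default_factory=list)


taxi = PEAS(
    agent="Automated taxi",
    performance=["safety", "speed", "profits"],
    environment=["city streets", "weather", "pedestrians"],
    actuators=["steering", "accelerator", "brake", "display"],
    sensors=["cameras", "LiDAR", "GPS", "keyboard"],
)

for name in ("performance", "environment", "actuators", "sensors"):
    print(f"{name:>12}: {', '.join(getattr(taxi, name))}")
```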

Example: Internet Shopping Agent. An agent can also be software, for example an Amazon-like agent that searches for products, compares prices, and suggests the best option to buy.

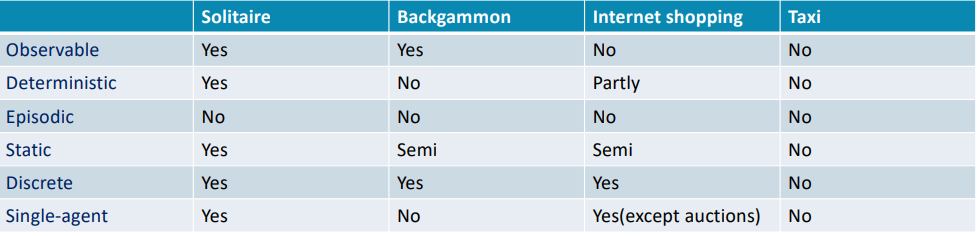

Properties of Task Environments

Task environments vary along several significant dimensions that determine the appropriate agent design and the applicability of each of the principal families of techniques for agent implementation.

- Fully vs. Partially Observable: Whether sensors detect all aspects relevant to the choice of action.

- Single-agent vs. Multiagent: Whether the environment contains other agents (competitive or cooperative).

- Deterministic vs. Nondeterministic (Stochastic): Whether the next state is completely determined by the current state and the agent’s action.

- Episodic vs. Sequential: Whether the agent’s experience is divided into independent atomic episodes.

- Static vs. Dynamic: Whether the environment changes while the agent is deliberating.

- Discrete vs. Continuous: Applied to the state of the environment, time, and the percepts/actions.

- Known vs. Unknown: Whether the “laws of physics” of the environment are known to the agent.

The hardest case is partially observable, multiagent, nondeterministic, sequential, dynamic, continuous, and unknown. Medical diagnosis comes close to it on most dimensions:

- Observability: partially observable, because you can’t see “inside” the patient perfectly.

- Deterministic/Stochastic: stochastic, because the same treatment might work on Patient A but not on Patient B.

- Episodic/Sequential: sequential, because decisions have long-term consequences.

- Static/Dynamic: dynamic, because the patient’s health is constantly evolving.

- Discrete/Continuous: continuous, because vital signs are real-valued numbers.

- Single/Multiagent: single agent (the disease isn’t an opponent trying to defeat you).
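The classification of medical diagnosis can be recorded along six of the dimensions and checked against the "hardest case". In this Python sketch, known/unknown is left out because the notes do not classify medical diagnosis on it; the crossword-puzzle entry is added for contrast, following the textbook's usual classification (fully observable, single-agent, deterministic, sequential, static, discrete). The class and method names are illustrative.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TaskEnvironment:
    """Classification of a task environment along six of the dimensions above."""
    name: str
    fully_observable: bool
    multiagent: bool
    deterministic: bool
    episodic: bool
    static: bool
    discrete: bool

    def hard_dimensions(self) -> List[str]:
        """List the dimensions on which this environment takes the harder value."""
        harder = {
            "partially observable": not self.fully_observable,
            "multiagent": self.multiagent,
            "nondeterministic": not self.deterministic,
            "sequential": not self.episodic,
            "dynamic": not self.static,
            "continuous": not self.discrete,
        }
        return [dim for dim, is_harder in harder.items() if is_harder]


medical = TaskEnvironment("Medical diagnosis", fully_observable=False, multiagent=False,
                          deterministic=False, episodic=False, static=False, discrete=False)
crossword = TaskEnvironment("Crossword puzzle", fully_observable=True, multiagent=False,
                            deterministic=True, episodic=False, static=True, discrete=True)

for env in (medical, crossword):
    print(env.name, "->", env.hard_dimensions())
```

Medical diagnosis is hard on five of the six dimensions; only being single-agent keeps it from the full hardest case.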

The task environment type largely determines the agent design. The real world is partially observable, stochastic, sequential, dynamic, continuous, and multiagent.

The structure of Agents / Agent architecture

The job of AI is to design an agent program that implements the agent function, i.e., the mapping from percepts to actions.

Agent architecture: we assume this program runs on some sort of computing device with physical sensors and actuators, called the agent architecture. Thus, agent = architecture + program.

In general, the architecture

- makes the percepts from the sensors available to the program

- runs the program, and

- feeds the program’s action choices to the actuators as they are generated
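The three responsibilities of the architecture can be sketched as a loop. This Python is a minimal illustration under stated assumptions: `VacuumWorld` is a hypothetical two-square simulator standing in for the physical sensors and actuators, and `run` plays the role of the architecture by passing each percept to the agent program and each chosen action back to the world.

```python
from typing import Callable, Dict, List, Tuple

Percept = Tuple[str, str]  # (location, status)
AgentProgram = Callable[[Percept], str]


class VacuumWorld:
    """Hypothetical two-square world standing in for real sensors and actuators."""

    def __init__(self, status: Dict[str, str], location: str = "A") -> None:
        self.status = dict(status)  # square -> "Clean" or "Dirty"
        self.location = location

    def percept(self) -> Percept:
        # What the sensors report: the current square and its status.
        return (self.location, self.status[self.location])

    def execute(self, action: str) -> None:
        # What the actuators do with the chosen action.
        if action == "Suck":
            self.status[self.location] = "Clean"
        elif action == "Right":
            self.location = "B"
        elif action == "Left":
            self.location = "A"


def run(program: AgentProgram, world: VacuumWorld, steps: int) -> List[str]:
    """Architecture loop: percepts to the program, actions to the actuators."""
    actions: List[str] = []
    for _ in range(steps):
        percept = world.percept()  # make the percept available to the program
        action = program(percept)  # run the program
        world.execute(action)      # feed the chosen action to the actuators
        actions.append(action)
    return actions


def reflex_vacuum_agent(percept: Percept) -> str:
    location, status = percept
    if status == "Dirty":
        return "Suck"
    return "Right" if location == "A" else "Left"


world = VacuumWorld({"A": "Dirty", "B": "Dirty"})
print(run(reflex_vacuum_agent, world, steps=4))
print(world.status)
```

The same `run` loop works with any function that maps a percept to an action, which is the point of separating architecture from program.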

Agent Types

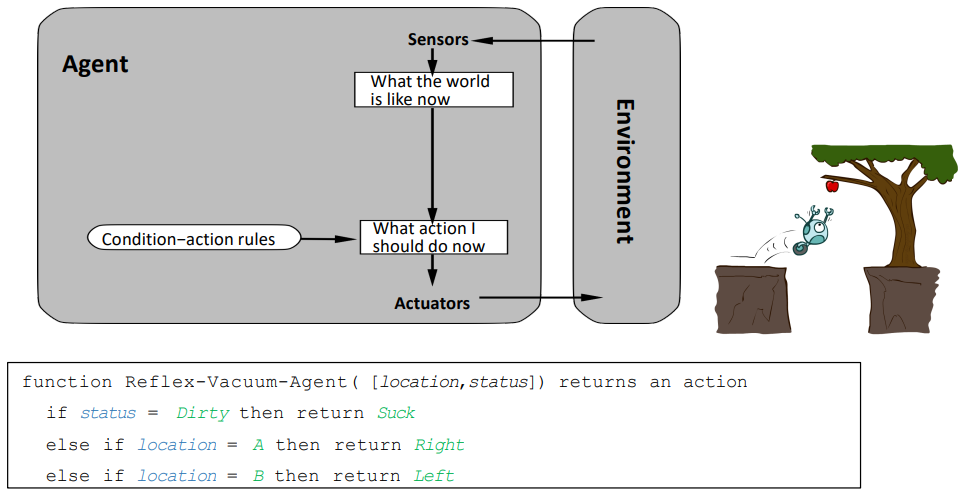

Simple Reflex Agents

- Select actions based only on the current percept, ignoring history.

- Use condition-action rules (e.g., “if temperature > 25 then turn on AC”).

- Only work if the environment is fully observable.

Reflex agents are not capable of learning or making decisions based on their beliefs and desires.
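The condition-action structure can be sketched in Python as a list of rules checked against the current percept only. This is a minimal sketch: the temperature percept, the action names `TurnOnAC` and `NoOp`, and the helper `interpret_input` are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, float]
Rule = Tuple[Callable[[State], bool], str]  # (condition, action)


def interpret_input(percept: float) -> State:
    """Turn the raw sensor reading into a state description (here: just the temperature)."""
    return {"temperature": percept}


def make_simple_reflex_agent(rules: List[Rule], default: str = "NoOp") -> Callable[[float], str]:
    """Build an agent program that looks only at the current percept."""
    def program(percept: float) -> str:
        state = interpret_input(percept)  # no memory of earlier percepts
        for condition, action in rules:   # the first rule whose condition holds fires
            if condition(state):
                return action
        return default                    # no rule matched
    return program


rules: List[Rule] = [
    (lambda s: s["temperature"] > 25, "TurnOnAC"),  # "if temperature > 25 then turn on AC"
]

thermostat = make_simple_reflex_agent(rules)
for reading in (28.0, 21.0):
    print(reading, "->", thermostat(reading))
```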

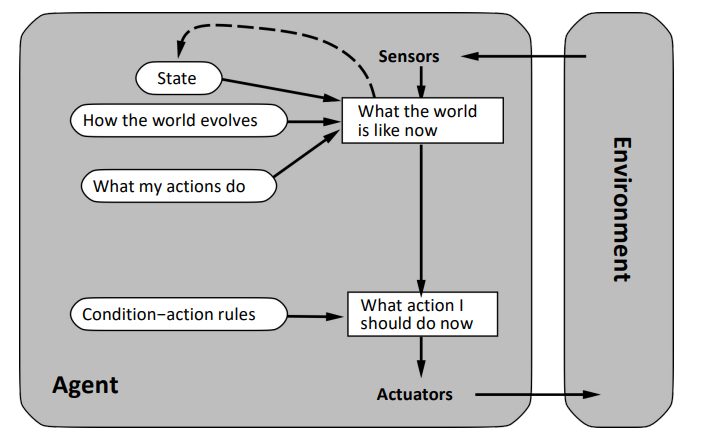

Reflex Agents with State

- Maintain an internal state that tracks aspects of the world not currently visible.

- Use this state to make more informed decisions, considering both the current and past states of the environment.

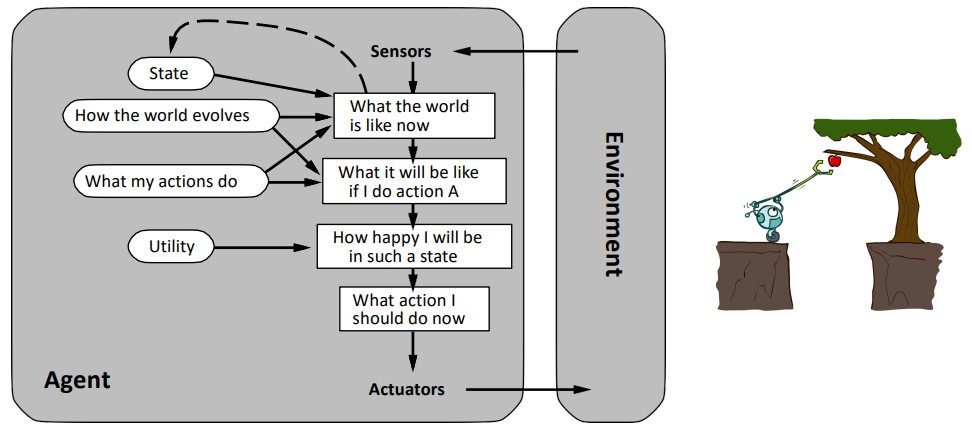

Model-based Reflex Agents

To update its internal state over time, the agent program must encode two kinds of knowledge:

- Transition model: knowledge about how the world changes over time, covering both the effects of the agent’s actions and how the world evolves independently of the agent.

  - e.g., “if I turn the wheel, the car turns”

- Sensor model: knowledge about how the state of the world is reflected in the agent’s percepts.

An agent that uses such models is called a model-based agent.
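A model-based version of the vacuum agent can be sketched in Python. The internal state is the agent's belief about each square; the sensor model is that a percept reveals the status of the current square; the transition model is assumed here to be that `Suck` leaves the current square clean and that dirt never reappears. The class name is illustrative.

```python
from typing import Dict, Optional, Tuple

Percept = Tuple[str, str]  # (location, status of the current square)


class ModelBasedVacuumAgent:
    """Vacuum agent that remembers what it believes about squares it cannot currently see."""

    def __init__(self) -> None:
        # Internal state: believed status of each square; None means "not observed yet".
        self.belief: Dict[str, Optional[str]] = {"A": None, "B": None}

    def __call__(self, percept: Percept) -> str:
        location, status = percept
        # Sensor model: the percept reveals the status of the square the agent is in.
        self.belief[location] = status
        if status == "Dirty":
            # Transition model (assumed): sucking leaves this square clean,
            # and dirt never reappears.
            self.belief[location] = "Clean"
            return "Suck"
        if all(s == "Clean" for s in self.belief.values()):
            return "NoOp"  # both squares believed clean: stop moving
        return "Right" if location == "A" else "Left"


agent = ModelBasedVacuumAgent()
for percept in [("A", "Dirty"), ("A", "Clean"), ("B", "Dirty"), ("B", "Clean")]:
    print(percept, "->", agent(percept))
```

On the last percept a simple reflex agent would answer `Left` and keep shuttling between the squares; the internal state is what lets this agent conclude that its job is done.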

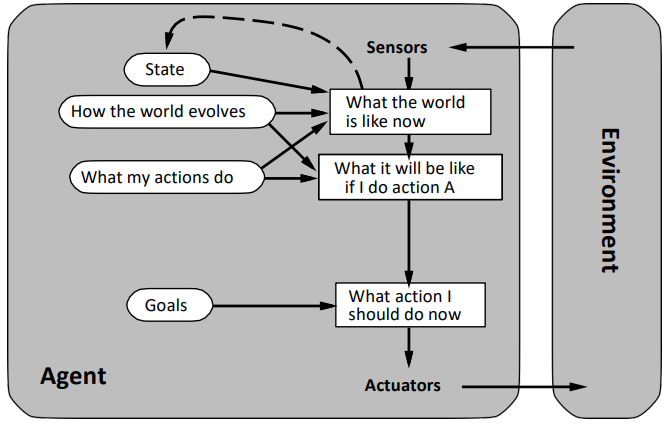

Goal-Based Agents

Knowing something about the current state of the environment is not always enough to decide what to do.

A goal-based agent:

- Uses goal information to describe desirable situations.

- Can act more flexibly by considering “what if” scenarios (planning/search).

  - Search is a subfield of AI devoted to finding action sequences that achieve the agent’s goals.

- Decision-making involves considering the future consequences of actions.

In reflex agents, this information is not explicitly represented because the built-in rules map directly from percepts to actions.

A goal-based agent is more flexible due to its explicit representation of knowledge, which allows for easier modifications. Consider this example:

- Changing the behavior of a goal-based agent to reach a different destination is as simple as specifying that new destination as the goal

- In contrast, the rules of a reflex agent that determine when to turn or go straight only apply to a specific destination and must be entirely replaced to navigate to a different location
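The destination example can be sketched with a simple search. In this Python, the road map and intersection names are hypothetical, and breadth-first search is used only because it is the simplest search that returns a route with the fewest steps; the point is that the goal is an input, so changing the destination means changing one argument rather than rewriting rules.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical road map: intersection -> directly reachable intersections.
ROADS: Dict[str, List[str]] = {
    "Depot": ["Market", "Station"],
    "Market": ["Depot", "Park"],
    "Station": ["Depot", "Park", "Airport"],
    "Park": ["Market", "Station"],
    "Airport": ["Station"],
}


def plan_route(start: str, goal: str) -> Optional[List[str]]:
    """Breadth-first search for a sequence of intersections from start to goal."""
    frontier = deque([[start]])  # paths waiting to be extended, oldest first
    visited = {start}
    while frontier:
        path = frontier.popleft()
        if path[-1] == goal:
            return path
        for nxt in ROADS.get(path[-1], []):
            if nxt not in visited:
                visited.add(nxt)
                frontier.append(path + [nxt])
    return None  # goal unreachable


# Changing the destination only means passing a different goal.
print(plan_route("Depot", "Park"))
print(plan_route("Depot", "Airport"))
```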

Utility-Based Agents

Goals alone are not enough to generate high-quality behavior in most environments. For example, numerous action sequences can lead a taxi to its destination, achieving the goal. However, some sequences are faster, safer, or more cost-effective than others.

A more general performance measure should allow comparing different states of the world according to how much utility they give the agent.

A performance measure assigns a score to any given sequence of environment states:

- An agent’s utility function is an internalization of the performance measure

- If the internal utility function and the external performance measure agree, an agent that chooses actions to maximize its utility will be rational according to the external performance measure.

A utility-based agent has many advantages in terms of flexibility and learning:

- When there are conflicting goals, only some of which can be achieved (for example, speed and safety), a utility function specifies the appropriate tradeoff.

- When there are several goals the agent can aim for, none of which can be achieved with certainty, utility provides a way to weigh the likelihood of success against the importance of the goals.

Expected utility:

- Since many real-world domains are partially observable and nondeterministic, the decision-making process operates under uncertainty

- A rational utility-based agent chooses the action that maximizes the expected utility of the action outcomes.

- In other words, it chooses the action with the highest utility on average, where each possible outcome’s utility is weighted by its probability.
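In symbols, a simplified form is $EU(a) = \sum_{s'} P(s' \mid a)\,U(s')$, and the agent picks $a^* = \arg\max_a EU(a)$. The Python sketch below applies this to a hypothetical taxi route choice; the outcomes, probabilities, and utilities are invented for illustration.

```python
from typing import Dict, List, Tuple

Outcome = Tuple[float, float]  # (probability, utility)


def expected_utility(outcomes: List[Outcome]) -> float:
    """Probability-weighted average of the utilities of an action's possible outcomes."""
    return sum(p * u for p, u in outcomes)


def best_action(model: Dict[str, List[Outcome]]) -> str:
    """Pick the action with maximum expected utility."""
    return max(model, key=lambda a: expected_utility(model[a]))


# Hypothetical taxi example: probabilities and utilities are made up.
model = {
    "highway": [(0.8, 10.0), (0.2, -20.0)],  # usually fast, sometimes a bad traffic jam
    "side_streets": [(1.0, 5.0)],            # slower but predictable
}

for action, outcomes in model.items():
    print(action, expected_utility(outcomes))
print("choose:", best_action(model))
```

The highway gives the better result in most outcomes, yet the risk of a jam lowers its expected utility (4.0 versus 5.0); an agent that only checked whether the destination is reached could not tell the two routes apart.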

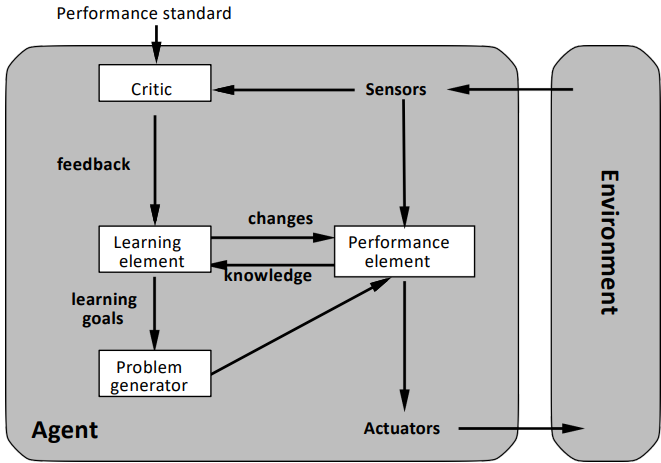

Learning-based Agents

If an agent needs to act in an unknown environment, learning is key to possible success: it allows the agent to become more competent than its initial knowledge alone would allow.

A learning agent consists of four conceptual elements:

- Learning Element: Responsible for making improvements.

- Performance Element: Responsible for selecting external actions (the “classic” agent).

- Critic: Provides feedback on how the agent is doing based on a fixed performance standard.

- Problem Generator: Suggests actions that will lead to new and informative experiences (exploration).
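The four elements can be mapped onto methods of a toy learning agent. In the Python sketch below, the setting (repeatedly choosing between two hypothetical taxi routes with fixed travel times), the running-average update in the learning element, and the rule "every fifth step, try the least-tried action" in the problem generator are all illustrative assumptions, not the textbook's design.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class LearningAgent:
    """Toy learning agent for a repeated choice among fixed actions (hypothetical setting)."""

    def __init__(self, actions: List[str],
                 performance_standard: Callable[[str, float], float],
                 explore_every: int = 5):
        self.actions = list(actions)
        self.performance_standard = performance_standard  # fixed and external to the learner
        self.explore_every = explore_every
        self.value: Dict[str, float] = defaultdict(float)  # learned: mean feedback per action
        self.count: Dict[str, int] = defaultdict(int)
        self.steps = 0

    def performance_element(self) -> str:
        # Select the external action: the best one according to current knowledge.
        return max(self.actions, key=lambda a: self.value[a])

    def problem_generator(self) -> str:
        # Suggest an informative experiment: the action tried least often so far.
        return min(self.actions, key=lambda a: self.count[a])

    def critic(self, action: str, outcome: float) -> float:
        # Judge the outcome against the fixed performance standard.
        return self.performance_standard(action, outcome)

    def learning_element(self, action: str, feedback: float) -> None:
        # Improve the knowledge used by the performance element: update the running mean.
        self.count[action] += 1
        self.value[action] += (feedback - self.value[action]) / self.count[action]

    def step(self, environment: Callable[[str], float]) -> str:
        self.steps += 1
        explore = self.steps % self.explore_every == 0
        action = self.problem_generator() if explore else self.performance_element()
        outcome = environment(action)
        self.learning_element(action, self.critic(action, outcome))
        return action


# Hypothetical environment: travel time in minutes for each route.
def environment(route: str) -> float:
    return {"highway": 30.0, "back_roads": 20.0}[route]


agent = LearningAgent(["highway", "back_roads"],
                      performance_standard=lambda route, minutes: -minutes)  # shorter is better
for _ in range(20):
    agent.step(environment)
print(agent.performance_element(), dict(agent.value))
```

The critic applies the same performance standard at every step, while the learning element changes what the performance element will choose next.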