SC - Lezione 19

Checklist

Domande, Keyword e Vocabulary

- Page Rank

- Importance of a Page in Page Rank

- Page Rank’s approximated scaled adjacency matrix

- Power Method

- Efficiently computing

- Markov Chain

- Random Process

- Transition

- Sequence of state probability distribution vectors

- Conditioned probability

- Conditional probability vs Joint Probability

- Example: Markov Chain for weather conditions

- Limit State

- Power Method

- Observation on the limit state and demonstration

- Markov Chain for PageRank

- Markov Chain for text generation

- Hidden Markov Model

- Emission Probabilities Matrix

Appunti SC - Lezione 19

Page Rank

- Continuing Lecture 18 - Page Rank

Rate of convergence of the Power Method

In order to estimate the rate of convergence of the Power Method, applied to the matrix

The smaller the ratio, the faster the convergence.

Efficiently computing

We exploit the fact that

Therefore:

Reducing the problem to linear system

If the problem is of small size, the score vector can be even be computed as solution of the linear system.

Exercises

We have a synthetic web of 20 pages, connections are generated randomly. We interpret this web as a direct graph.

We apply the page rank algorithm

Finally, we execute the script repoted in the Matlab documentation that analyzes the web consisting of the Mathworks site and the first 100 sites linked to it.

Markov Chains

We will show in the next lecture that you can derive the Page Rank Algorithm through the Markov Chains

Markov Chain and random process - Main Idea

A Markov Chain is a random process that undergoes transitions from one state to another within a finite or countable set of states (called state space).

Say we want to model a behavior of a system and this system evolves in time, following a random process. My system can be in one state and this it appartains to a set of certain known state in which the system can be. The system pass from one state to another and this is called transition.

The probability of transitioning to a future state depends solely on the current state, without any influence from the sequence of events that preceded it. This characteristic is known as the Markov property, or memorylessness.

The state can be anything: from weather conditions, to health status of a patient or the web pages. In which web page a user is in a certain step, and so on. Also a text can be generated using Markov Chain.

Components of Markov Chain

- State space

- Transitioning probability matrix

- Initial state distribution vector: a discrete probability distribution over the state space

Observation 1:

Definition

A Markov Chain can be defined as a sequence of (state) probability distribution vectors

with

sbaglio o assomiglia molto al power method?

Alternative definition

Note that the definition of the transition probability matrix

In stochastis cases like this, we may use the probability notation.

The equation says that the conditioned probability of moving to state

Conditional Probability vs Joint Probability:

Recall that from basic probability theory, the joint probability of being on state

is equal to the product of the probability of being on state

- See also Bayes Theorem

Therefore if we have:

PLUS is like an OR. This is the expression is the sum of the probabilities of joint events, since we can get to state

Example: Markov Chain for weather conditions

Let’s consider a system that represents the weather conditions in a certain place. Suppose the system can only stay in one of the following states on a given day: 1-clear, 2-cloudy, 3-rainy. Finally, suppose that the change of state from one day to the next is determined only by the following probabilities, which do not depend on the day, expressed through the transition probability matrix

- The columns represents today state and the rows are tomorrow state.

- The states are 1-clear,2-cloudy,3-rainy.

For example in P(1,1), if today is clear, the probability that tomorrow is clear will be 0.8, that is 80% and so on.

Suppose that i want to know the situation tomorrow, and suppose that i have that

You multiply the matrix

The system can also be represented with a directed graph.

The situation in the day after tomorrow will be

Note that

Limit State

Will this chain eventually converge or not?

Since that

The convergence is called the vector limit, or limit state, and it is the eigenvector corresponding to the dominant eigenvalue

Observation

Whatever the initial state

If we raise

Demonstration

Take any matrix

i can put

Realization of a Markov Chain

In the applications of markov chain, it is often necessary to realize a specific path starting from a given initial condition

We have to pick a characteristic from the distribution.

Supose we have a distribution of the state:

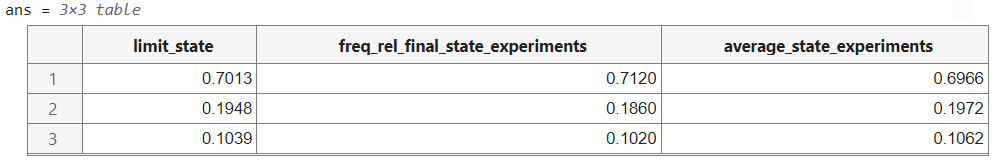

We carry out an experiment involving the weather system analyzed so far.

We consruct

Then i also record the relative frequencies of the three states that have been obtainted during that path. I.e i count how many times i have been in state 1, in state 2 and in state 3.

If i take the average frequencies along all the 1000 experiments, i see that this is very close to the frequencies that i have record along the states. So all the vectors are an approximation of the limit state.

Markov Chain for PageRank

Imagine that i’m a web surfer, i start from any web page in the internet and i decided to walk around the web and i decide to do this randomly.

Component of the Markov Chain for Page Rank

The web is a discrete state space, moving from a web page to another is a transition.

Each web page is a state in the Markov Chain, and we represent with

The transition probability matrix

Teleportation: If i’m in a web page, in the 0.85 percent of the case i will pick randomly one of the outgoing pages. in 0.15 i will just jump to another web page of the web even if this is not connected. This damping factor is called

So we realize that, using previous assumptions, the probability matrix

Markov Chain for Text Generation

Main Idea: take a list of words that will represent the set of state. Assign a probability to pass from word

More formally:

- state space

- Transition Probability Matrix

Say i have a state

The transition probability matrix

Hidden Markov Model

THe HMM extend the Markov Chain by adding the concept of hidden states. It’s a sort of generalization of the Markov Chain.

In an Hidden Markov Model a transition lead to a state that is not directly observable and that it will eventually produce a state that is observable.

The components of a HMM are:

- Hidden State space

- Observation Space

- Transition Probabilities Matrix

- Emission Probabilities matrix

Each hidden state can generate observations

At each step

What are the inputs of a HMM?:

- Initial state distribution

- Transition probability matrix

- Emission probability matrix

- Sequence of observations

Pratical Example of Hidden Markov Chain

The matrix B is:

and so on.

The HMM captures how the current belief about the weather (in terms of the probability distribution over hidden states) is updated based on both the transition probabilities between states and the likelihood of observing the current conditions given these states